Code generation tools are powerful and can significantly accelerate development work. Their main limitation is not capability, but context. Without access to organizational knowledge, internal conventions, and system-specific patterns, generated output often requires careful verification.

This is why generation tools work best when paired with AI code search, as the latter provides immediate visibility into the existing codebase, making it easier to align AI-generated changes with the realities of the system.

In regulated environments, the adoption model may look different. Security or compliance constraints can restrict the use of cloud-based code generation. AI code search still improves developer efficiency across implementation, review, and documentation workflows by enabling fast navigation and comprehension of large multi-repository codebases.

What is AI code intelligence, and how does it help in practice?

Code intelligence tools help developers find and understand existing code. If a search returns a poor result, the developer simply searches again; nothing in your codebase changes.

Code search also integrates without friction: no new review processes, no changes to CI/CD, no new permissions. Generation tools, by contrast, require policies for AI-written code, and drafting those policies stalls many pilots before they produce data.

Clear metrics for measuring AI code intelligence

An AI code search assistant only reads your code, which makes it much easier to measure its impact. You can track simple things like:

• how long it takes to find the right piece of code

• how quickly new developers get up to speed

• how many hours the team spends searching each week

If each of your 20 developers spends 5 hours a week understanding code, that adds up to 100 hours of engineering time per week. At $75 per hour, that’s $7,500 per week, or $360,000 per year assuming 48 working weeks. A 10% reduction recovers $36,000 per year, a realistic input for an AI ROI framework for tech teams.
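The arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, not a finished ROI model: the function name and parameters are hypothetical, and the 48 working weeks per year is an assumption chosen because it reproduces the $360,000 figure.

```python
def annual_search_cost(developers, hours_per_week, hourly_rate, working_weeks=48):
    """Yearly cost of the time a team spends understanding existing code.

    working_weeks=48 is an assumption, not a measured value.
    """
    return developers * hours_per_week * hourly_rate * working_weeks


# Inputs taken from the example in the text
baseline = annual_search_cost(developers=20, hours_per_week=5, hourly_rate=75)

# Share of search time the tool is expected to remove (10% in the example)
reduction = 0.10
savings = baseline * reduction

print(f"Baseline cost: ${baseline:,.0f} per year")
print(f"Recovered at {reduction:.0%}: ${savings:,.0f} per year")
```

Swapping in your own headcount, hourly rate, and measured search time gives a first-pass number to bring to a budget discussion.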

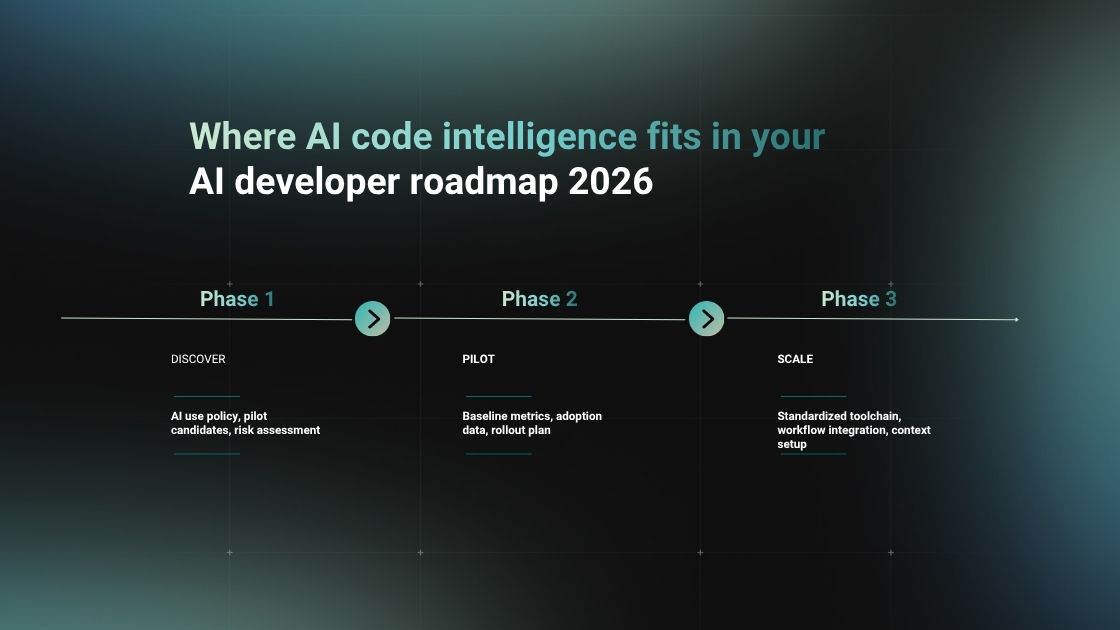

Faster path to Phase 3 expansion

Code generation tools face tough questions from security and legal teams. Code search tools face fewer objections because they produce no code that enters production.

An on-premises code search tool indexes your repositories on your own servers, so your IP never leaves the organization, and lets developers ask natural-language questions against the company's codebase. CodeQA follows that model and runs entirely on your infrastructure. The baseline ROI for developer tools established in Phase 2 lays the groundwork for expanding into code generation in Phase 3.

ROI quantification: metrics for measuring AI code search assistants

The calculation above (search time × hourly rate × team size) is a starting point. But CFOs want a complete picture. To build a defensible AI ROI framework for tech teams, you also need to account for time lost to interruptions, rework, and duplicated effort.

The metrics competitors ignore

Most dashboards show how much code was written. But velocity depends on how much time was wasted.

Time saved on code discovery: Developers spend a noticeable part of their day working through code that already exists: checking prior implementations, tracing dependencies, and looking for similar solutions. This often adds up to around an hour per workday. Cutting that effort from roughly 60 minutes to 10 minutes per day saves 50 minutes daily, which over roughly 240 working days recovers close to 200 hours per engineer per year.

This overhead tends to increase with seniority. Product Owners, Tech Leads, and CTOs operate across multiple projects, repositories, and architectural contexts. Without dedicated code intelligence tooling, moving through this knowledge layer becomes time-consuming and mentally demanding.

Reduced senior interruptions: Every "how does this authentication wrapper work?" question breaks a senior engineer's flow. An AI code search assistant acts as the first line of defense, deflecting queries before they reach your most expensive resources.

Faster onboarding: Measure time-to-first-PR. Giving new hires instant answers to architectural questions typically shortens onboarding by 30-40%.

Fewer duplicated implementations: Semantic search reveals existing utility functions, stopping teams from rebuilding logic that already exists. Such duplication is a major source of long-term technical debt.
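Two of the metrics above, code-discovery time and time-to-first-PR, are simple to compute once the raw data is collected. The sketch below is one possible way to do it. The function names are hypothetical, the 240 working days per year is an assumption, and the hire dates are made-up placeholders rather than real measurements.

```python
from datetime import date
from statistics import median


def discovery_hours_recovered(before_min, after_min, working_days=240):
    """Hours per engineer per year recovered by faster code discovery.

    working_days=240 is an assumption; adjust it to your own calendar.
    """
    return (before_min - after_min) * working_days / 60


def median_days_to_first_pr(hires):
    """Median number of days between a hire's start date and first PR.

    `hires` is a list of (start_date, first_pr_date) pairs.
    """
    return median((first_pr - start).days for start, first_pr in hires)


# Figures from the text: 60 minutes per day reduced to 10 minutes per day
print(discovery_hours_recovered(before_min=60, after_min=10))

# Placeholder data for illustration only; replace with your own records
sample_hires = [
    (date(2025, 1, 6), date(2025, 1, 20)),
    (date(2025, 2, 3), date(2025, 2, 13)),
    (date(2025, 3, 3), date(2025, 3, 24)),
]
print(median_days_to_first_pr(sample_hires))
```

Running the same functions on data from before and after a pilot gives a like-for-like comparison for both metrics.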

Sample before/after measurement model

This is the difference between testing AI in isolation and measuring its impact across your 2026 technology roadmap.

Generation and search - different value mechanics in AI-assisted development

The ROI examples above focused on code search, but they also highlight a practical difference between common AI tools. Code generation primarily affects how quickly new code is produced. AI code search influences how much time engineers spend working through the code that already exists.

Both mechanisms improve productivity, but they operate on different parts of daily development work. Generation tools speed up implementation. Code search tools reduce the effort required to find, inspect, and verify existing logic, dependencies, and patterns.

This becomes particularly visible in larger systems. Code generation increases output, which makes fast access to existing patterns and dependencies more important. Code search shortens the time needed to inspect how the system already behaves, making AI-assisted changes easier to validate and adapt.

For organizations operating under security or compliance requirements, this difference often influences how AI adoption unfolds. Improving codebase visibility and knowledge retrieval typically becomes an early step that supports broader use of generation tools later.

Conclusion

The future of AI developer tools in enterprise environments follows a pattern: organizations that start with low-risk, high-visibility tools build the metrics foundation and organizational trust needed for broader adoption. CodeQA fits this approach. It runs on your infrastructure, indexes your repositories locally, and produces no code that enters production.

Talk to us about a pilot.